Managing a complex creative project often feels like wrestling with chaos. We juggle sprawling plotlines, intricate character arcs, and waves of feedback, all while our digital tools, a fragmented ecosystem of documents, chat apps, and task managers, add to the cognitive load rather than alleviating it. But what if we designed our tools not just to hold our data, but to structure our focus? What if our core philosophy was an opinionated take on how healthy collaboration actually works?

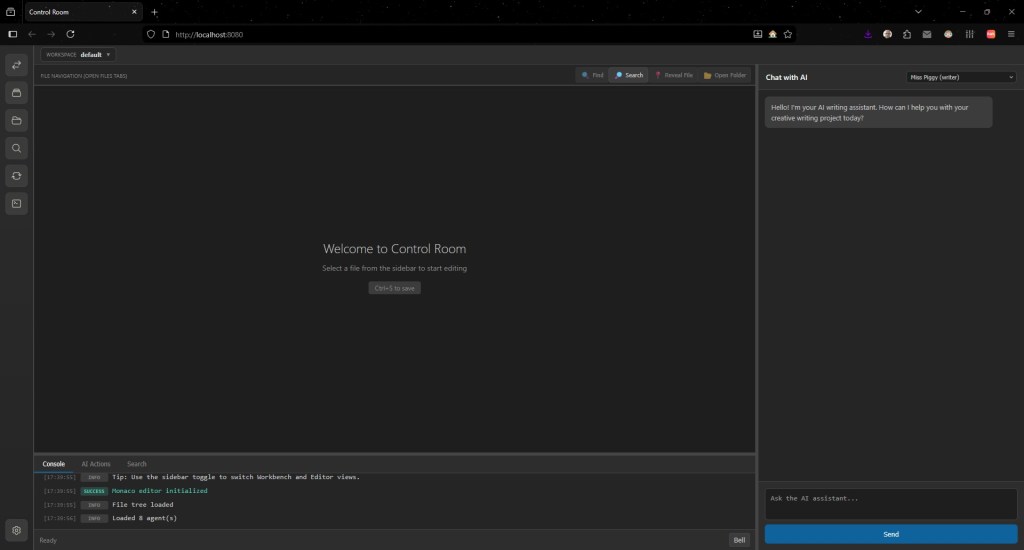

This is a look behind the curtain at Control Room, my current work in progress and the successor to my tool Writingway. Control Room is an AI agent system, but this post is not a list of features. It is an analysis of a design philosophy that pushes back against the prevailing norms of software development. I have made a series of often counterintuitive choices aimed at fostering a more focused and productive collaboration between writer and machine. The central argument, woven into every interaction, matters in our age of AI ubiquity: structural constraints beat prompting. The principle reflects a deep consideration for the human art of creation, and it suggests that the most thoughtful design is often about what we build a system not to do.

Your AI assistants aren’t just tools—they’re a team you build.

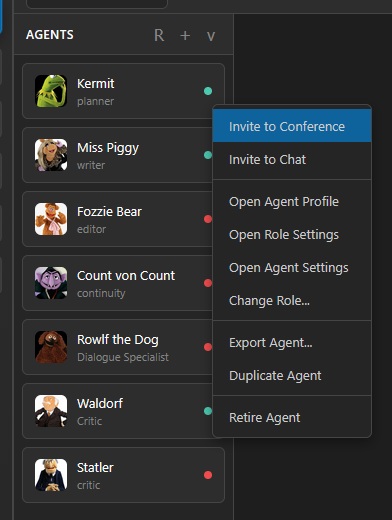

The first and most foundational design choice is to reject the “toolbox” metaphor entirely. In a world of disconnected AI utilities, this system presents a cohesive “writer’s room.” The common anti-pattern is a folder full of specialized but siloed apps; the solution here is a dynamic, configurable agent workforce. You begin with a default team (Planner, Writer, Critic, etc.) and then act as the showrunner, hiring specialized agents like a “Lore Specialist” or “Sensitivity Reader” as needed. As a project grows, you can re-task or even fire agents.

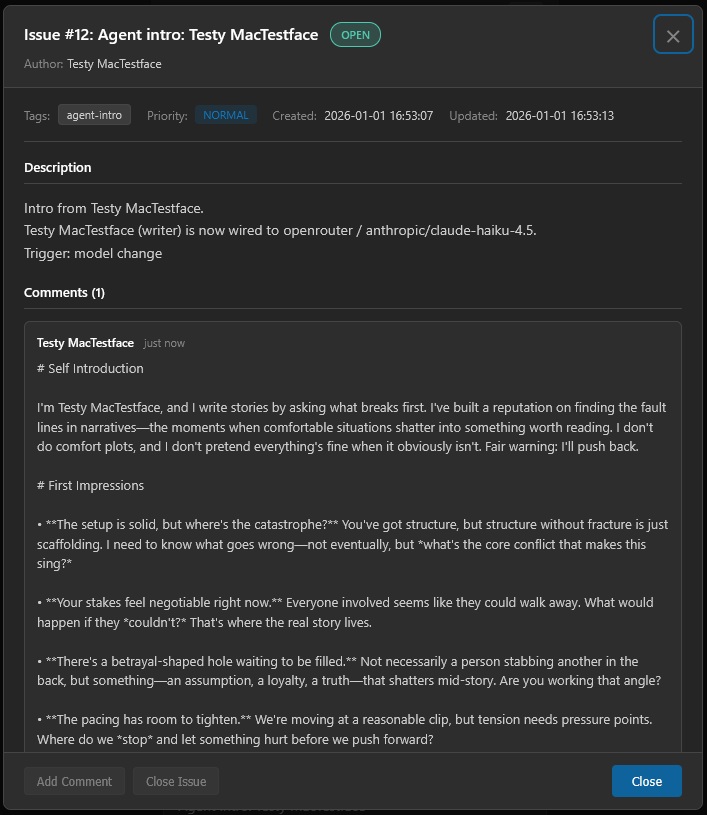

This simulation of a creative team runs deeper than just labels. The architecture distinguishes between an Agent (an individual entity, like “Bob Z. Betareader”) and a Role (a functional category, like “beta-reader”), so multiple agents can fill the same function. You can designate one agent in a role as the Primary Agent, which serves as the default for quick tasks, and appoint a Team Leader to arbitrate when agents reach a creative impasse. Agents coordinate by addressing each other via @mentions in project tasks. A Continuity agent can, for example, interject in a conversation between a Planner and a Writer to flag a timeline inconsistency, mirroring the organic, cross-functional dialogue of a human team.

Agents behave like a configurable writer’s room: each with a role, personality, tools, and memory profile, all wired into the existing infrastructure.
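To make the Agent/Role distinction concrete, here is a minimal Python sketch. It is not Control Room's actual code: the class and method names (`WritersRoom`, `hire`, `fire`, `primary_for`) and the rule that the first agent hired into a role becomes its Primary Agent are assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Agent:
    name: str                      # an individual, e.g. "Bob Z. Betareader"
    role: str                      # a functional category, e.g. "beta-reader"
    personality: str = ""
    tools: List[str] = field(default_factory=list)


@dataclass
class WritersRoom:
    agents: Dict[str, Agent] = field(default_factory=dict)
    primary: Dict[str, str] = field(default_factory=dict)  # role -> agent name
    team_leader: Optional[str] = None

    def hire(self, agent: Agent) -> None:
        self.agents[agent.name] = agent
        # Assumption: the first agent hired into a role becomes its primary.
        self.primary.setdefault(agent.role, agent.name)

    def fire(self, name: str) -> None:
        agent = self.agents.pop(name)
        if self.primary.get(agent.role) == name:
            remaining = self.in_role(agent.role)
            if remaining:
                # Promote the longest-serving remaining agent in that role.
                self.primary[agent.role] = remaining[0].name
            else:
                del self.primary[agent.role]
        if self.team_leader == name:
            self.team_leader = None

    def in_role(self, role: str) -> List[Agent]:
        return [a for a in self.agents.values() if a.role == role]

    def primary_for(self, role: str) -> Optional[Agent]:
        name = self.primary.get(role)
        return self.agents.get(name) if name else None
```

Keeping the role as plain data on the agent, with the primary tracked separately per role, is what lets two beta-readers coexist while quick tasks still have a single default recipient.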

All communication lives on a shared project board.

The writer’s room metaphor can only succeed if it avoids the primary failure of modern collaboration: fragmented communication. The chaos of disconnected Slack channels, email threads, and direct messages, where context is constantly lost and crucial decisions are buried, is a familiar frustration. This system’s solution is to eliminate private channels entirely. There are no inboxes. There are no DMs. For the agents, “Issues ARE the inbox.”

This principle, called the “Morning Coffee” pattern, dictates that an agent begins its “day” by scanning a single, shared project board for relevant tasks and discussions. This centralized board is the single source of truth, making every conversation, task, and decision transparent and directly tied to the work itself. It’s the table around which the entire writer’s room gathers. This structure isn’t merely a feature; it’s a necessary precondition for every other design principle to function. Without this shared space, the collaborative team would remain a collection of siloed tools, and the thoughtful constraints on memory and communication that follow would be impossible to enforce.
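A rough sketch of what a “Morning Coffee” scan could look like in Python follows. The `Issue` fields, the `@handle` mention syntax, and the relevance rule (an open issue that either mentions the agent or is assigned to its role) are assumptions for illustration; the real relevance logic may differ.

```python
import re
from dataclasses import dataclass, field
from typing import List, Set

MENTION = re.compile(r"@([\w-]+)")  # hypothetical @handle syntax


@dataclass
class Issue:
    number: int
    title: str
    comments: List[str] = field(default_factory=list)
    assigned_roles: Set[str] = field(default_factory=set)
    is_open: bool = True


def morning_coffee(board: List[Issue], handle: str, role: str) -> List[Issue]:
    """Return the open issues an agent should look at when its 'day' starts."""
    relevant = []
    for issue in board:
        if not issue.is_open:
            continue
        mentioned = any(handle in MENTION.findall(c) for c in issue.comments)
        if mentioned or role in issue.assigned_roles:
            relevant.append(issue)
    return relevant
```

Because the board is the only input, there is nowhere else for context to hide: an agent that was never mentioned and never assigned simply has nothing to read.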

The system has a “forgetting curve” built in.

In a provocative move against the digital world’s obsession with perfect recall, I endowed the system with the gift of forgetting. The common problem with digital tools is that their perfect, infinite memory becomes a cognitive burden, creating an unusable archive of irrelevant information. This system’s memory, in contrast, is not an ever-expanding database but a living system designed to let details fade, mirroring the efficiency of the human mind.

An agent’s memory of a project issue operates on a “5-level interest gradient.” A Level 5 memory is an active task, with the full context available. But if an agent doesn’t access that memory, it decays, dropping to a summary with key exchanges (Level 3), then to just a title and resolution summary (Level 2), and finally to a simple, one-sentence “semantic trace” (Level 1). A decision about a character’s biology might decay from a long, detailed discussion into the simple trace: “Aelyth have tetrachromatic vision, see more colors than humans.” This prevents the shared project board from becoming an informational graveyard.
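The decay described above can be sketched in a few lines of Python. Everything about timing here is an assumption: the post does not say how quickly a memory drops a level, so `days_per_level` is a made-up parameter. The post also does not describe Level 4, so this sketch simply shows the full context at that level as a placeholder.

```python
from dataclasses import dataclass


@dataclass
class IssueMemory:
    full_context: str          # Level 5: active task, everything available
    key_exchanges: str         # Level 3: summary with key exchanges
    title_and_resolution: str  # Level 2: title and resolution summary
    semantic_trace: str        # Level 1: one-sentence trace
    level: int = 5
    idle_days: int = 0

    def tick(self, days: int = 1, days_per_level: int = 7) -> None:
        """Advance time without access; drop one level per idle period."""
        self.idle_days += days
        while self.idle_days >= days_per_level and self.level > 1:
            self.idle_days -= days_per_level
            self.level -= 1

    def access(self) -> str:
        """Using a memory revives it to full detail."""
        self.level = 5
        self.idle_days = 0
        return self.full_context

    def view(self) -> str:
        """What the agent sees at the current level."""
        if self.level >= 4:  # Level 4 is unspecified in the post; placeholder
            return self.full_context
        if self.level == 3:
            return self.key_exchanges
        if self.level == 2:
            return self.title_and_resolution
        return self.semantic_trace
```

Note that the level never falls below 1, which matches the idea that the trace survives even when everything else has faded.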

The system also watches for unproductive memory patterns through “leech detection.” It identifies issues that an agent repeatedly accesses but never successfully applies to its work, a clear signal of a cognitive dead end. This is a mechanism for promoting focus, guiding the AI away from rabbit holes and back toward productive creation.
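One simple way to express leech detection is to count accesses and applications per agent and issue. The sketch below is an illustration under assumptions: the `LeechDetector` class, the threshold of five accesses, and the idea that “applied” is an event the system can record are all hypothetical.

```python
from collections import Counter
from typing import List


class LeechDetector:
    def __init__(self, min_accesses: int = 5):  # threshold is an assumption
        self.min_accesses = min_accesses
        self.accesses = Counter()      # (agent, issue) -> times read
        self.applications = Counter()  # (agent, issue) -> times used in work

    def record_access(self, agent: str, issue: int) -> None:
        self.accesses[(agent, issue)] += 1

    def record_application(self, agent: str, issue: int) -> None:
        self.applications[(agent, issue)] += 1

    def leeches(self, agent: str) -> List[int]:
        """Issues this agent keeps rereading but has never applied."""
        return sorted(
            issue
            for (who, issue), count in self.accesses.items()
            if who == agent
            and count >= self.min_accesses
            and self.applications[(who, issue)] == 0
        )
```

What happens to a flagged issue (forced decay, escalation to the Team Leader, or something else) is left open here, since the post does not say.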

The key insight: memories that aren’t used should degrade, but not disappear. The semantic trace (“I once knew this”) is enough to trigger revival if the memory is needed again. Nothing is ever truly lost, just hidden and locked away.

The team argues and is concise.

Perhaps the most radical design principle is the explicit rejection of AI agreeableness. AI models are notorious for “hallucinated consensus” and “hysteric feedback loops,” where polite affirmations spiral into useless, context-poisoning chatter. To combat this, the system deploys a sanity layer called the “prefrontal exocortex,” which enforces quality through two powerful structural constraints.

First, to prevent echo chambers, a “Devil’s Advocate” mode automatically activates on a suitable agent, such as the Critic or Continuity agent, compelling it to inject structured dissent and challenge an emerging consensus. Second, and more critically, the system embraces the principle that “scarcity creates quality” by limiting agents to a maximum of two comments on any issue. This simple rule transforms the nature of communication. It makes low-value chatter (“Great idea!”) mechanically impossible and forces every contribution to be substantive. Agents are required to produce evidence-aware comments, grounding their arguments with direct references to project files and other issues.
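The two-comment rule is a structural constraint in the literal sense: the board can refuse the post, whatever the prompt says. A minimal Python sketch follows. It assumes the limit applies per agent per issue, and that “evidence” can be recognized as either an `Issue #N` reference or a `path/file.ext:lines` reference; both the regex and the class names are illustrative, not the actual implementation.

```python
import re
from collections import defaultdict

MAX_COMMENTS = 2  # per agent, per issue (interpretation of the rule)

# Hypothetical evidence patterns: "Issue #34" or "chapter-3/scene-2.txt:45-67"
EVIDENCE = re.compile(r"Issue #\d+|[\w./-]+\.\w+:\d+(?:-\d+)?")


class CommentRejected(Exception):
    pass


class IssueThread:
    def __init__(self):
        self.comments = []              # list of (agent, body)
        self._count = defaultdict(int)  # agent -> comments posted so far

    def post(self, agent: str, body: str) -> None:
        if self._count[agent] >= MAX_COMMENTS:
            raise CommentRejected(f"{agent} has used both comments on this issue")
        if not EVIDENCE.search(body):
            raise CommentRejected("comment cites no file or issue as evidence")
        self._count[agent] += 1
        self.comments.append((agent, body))
```

With this in place, a bare “Great idea!” is rejected for lacking evidence, and a third comment from the same agent is rejected no matter how well argued it is.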

The difference is profound. A typical AI exchange (google “Claudius” for a funny example) might look like this:

- “This is a good idea.”

- “Yeah it’s a great idea.”

- “This idea is wonderful and we NEED to implement it!”

- “The idea is CRUCIAL for this scene, and it HAS to be implemented!”

- “If we don’t implement it AT ONCE, who knows what might happen!”

- “This might lead to TERRIBLE CONSEQUENCES!”

- “RED ALERT! We need to avert THE APOCALYPSE!”

- … a seven-comment chain that adds zero value (if we discard entertainment value here).

This system forces a single high-quality interaction:

- Critic: I support this direction. Looking at chapter-3/scene-2.txt (lines 45-67), the pacing already establishes tension. This proposal would pay off that setup. One consideration: this would require updating Issue #34, as the reactor failure would need to precede the docking sequence. Evidence: chapter-3/scene-2.txt:45-67, Issue #34. Impact: structural. Recommendation: Approve with timeline change.

This is the “structural constraints beat prompting” philosophy in its purest form. The system doesn’t just ask the AI to be better; it creates an environment where it has no other choice.

Conclusion

The most thoughtful design often reveals itself not in the features it adds, but in the structure it creates. This system is an interesting case study in designing for focus. By treating AI as a configurable team that communicates on a single shared board, endowing it with an imperfect but efficient memory, and building in mechanisms that enforce quality and dissent, the architecture prioritizes the health of the creative process itself.

It shows that by embedding opinionated solutions to common problems directly into the structure of our tools, we can create a more deliberate and ultimately more human-centric collaboration. It leaves us with a critical question to ponder as we integrate these powerful systems into our lives: What if the most important feature of our creative tools isn’t what they can do, but what they are designed not to do?

I’m really loving the idea behind Control Room. I usually only use AI (Claude) to structure and plan out my writing, provide analysis on logic and consistency, and then line edit at the very end – I try to keep the actual writing to myself to ensure the voice remains mine and no one can really argue that AI wrote it for me. Given that, it would be awesome to see how much I could offload to the AI to make the planning and analysis more effective.
